AP Syllabus focus: 'A least-squares regression model minimizes the sum of squared residuals, contains the point of means, and estimates the regression-line parameters.'

The least-squares regression line is the formal line of best fit for quantitative data, chosen by a mathematical rule rather than by eye, so predictions come from one consistent linear model.

What the least-squares regression line represents

When two quantitative variables show an approximately linear pattern, statisticians use the least-squares regression line to model that relationship. It is not just any line drawn through a scatterplot. It is the single line chosen by a rule that makes the overall prediction errors as small as possible in a squared sense.

Least-squares regression line: The line that minimizes the sum of the squared residuals for all observations in a data set.

The least-squares regression line is often called the LSRL. In AP Statistics, it is the standard regression line used for linear prediction from an explanatory variable to a response variable.

$\hat{y} = a + bx$

$\hat{y}$ = predicted response value, in response-variable units

$a$ = estimated y-intercept of the regression line

$b$ = estimated slope of the regression line

$x$ = explanatory-variable value, in explanatory-variable units

This equation describes predicted values, not necessarily the actual observed values. In most real data sets, points do not lie exactly on the line, so the LSRL balances the data instead of matching each observation perfectly.

Because the model is linear, the predicted change from one value of $x$ to another follows a straight-line pattern. The line may describe the data well or poorly, but the LSRL is still the line selected by the least-squares rule.
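As a minimal sketch of how the equation produces predictions, the Python snippet below evaluates $\hat{y} = a + bx$ at a few $x$-values. The coefficients `a = 2.2` and `b = 0.6` and the function name `predict` are made up for illustration; they do not come from any data set in these notes.

```python
def predict(a, b, x):
    """Return the predicted response y-hat = a + b*x."""
    return a + b * x

# Hypothetical coefficients, chosen only for illustration.
a, b = 2.2, 0.6

# Predicted responses at x = 1, 2, 3.
predictions = [predict(a, b, x) for x in (1, 2, 3)]

# A 1-unit increase in x changes the prediction by the slope b.
step = predict(a, b, 3) - predict(a, b, 2)
```

Whatever pair of $x$-values is chosen, the predicted change is the slope times the difference in $x$, which is what "straight-line pattern" means here.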

Why the method is called least squares

The key idea is that each observed point has some vertical distance from the regression line.

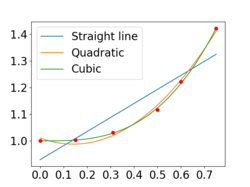

Scatterplot with the least-squares regression line and several residuals drawn as vertical line segments from each observed point to the fitted line. This directly illustrates that residuals are vertical differences (in response-variable units) and motivates why the least-squares criterion focuses on vertical prediction errors.

That distance is measured through the residual.

Residual: The difference between an observed response value and the response value predicted by the regression line.

A positive residual means a point lies above the line, and a negative residual means it lies below the line.

$e_i = y_i - \hat{y}_i$

$e_i$ = residual for observation $i$, in response-variable units

$y_i$ = observed response value for observation $i$

$\hat{y}_i$ = predicted response value for observation $i$

$\sum e_i^2 = \sum (y_i - \hat{y}_i)^2$ = sum of squared residuals for all observations

The LSRL is the line with the smallest possible value of the sum of squared residuals, $\sum (y_i - \hat{y}_i)^2$. Squaring matters for two important reasons:

Negative and positive residuals do not cancel each other out.

Larger residuals count more heavily than smaller ones, so points far from the line vertically have more effect on the fit.

Least squares is one criterion among several possible fitting rules, but it gives a definite and reproducible answer. If two people use the same data and the same variables, they will get the same least-squares regression line.
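The criterion can be illustrated with a short Python sketch that computes the sum of squared residuals for two candidate lines. The five data points are invented for this example, and the helper name `sum_squared_residuals` is hypothetical. The coefficients 2.2 and 0.6 are the least-squares values for this particular made-up data set; the second line is an arbitrary competitor.

```python
def sum_squared_residuals(a, b, xs, ys):
    """Sum of (observed y - predicted y)^2 for the line y-hat = a + b*x."""
    return sum((y - (a + b * x)) ** 2 for x, y in zip(xs, ys))

# Made-up data, for illustration only.
xs = [1, 2, 3, 4, 5]
ys = [2, 4, 5, 4, 5]

# Least-squares line for this data: y-hat = 2.2 + 0.6x.
ssr_lsrl = sum_squared_residuals(2.2, 0.6, xs, ys)

# A nearby line that looks plausible by eye: y-hat = 2.0 + 0.7x.
ssr_other = sum_squared_residuals(2.0, 0.7, xs, ys)

print(ssr_lsrl, ssr_other)
```

The competitor line gives a larger total (about 2.55 versus about 2.4), and the same would be true of any other line tried on this data.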

The point of means property

An important property of the LSRL is that it always passes through the point of means.

Point of means: The point $(\bar{x}, \bar{y})$, where $\bar{x}$ is the mean of the explanatory-variable values and $\bar{y}$ is the mean of the response-variable values.

This property gives a useful check on regression output. If you know the sample means of $x$ and $y$, the least-squares regression line must go through that point. In symbols, when $x = \bar{x}$, the predicted value from the LSRL is $\hat{y} = \bar{y}$.

The line does not need to pass through any other actual data point, and in many data sets it passes through none of them exactly. The point of means shows that the fitted line is centered around the average values of the two variables, even though individual observations may be above or below it.
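One way to see why the LSRL passes through the point of means uses the standard least-squares result that the intercept satisfies $a = \bar{y} - b\bar{x}$ (a formula these notes do not state elsewhere). Substituting $x = \bar{x}$ into the line gives:

$$
\hat{y} = a + b\bar{x} = (\bar{y} - b\bar{x}) + b\bar{x} = \bar{y}
$$

So whatever the slope turns out to be, the prediction at $\bar{x}$ is $\bar{y}$.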

Estimating the regression-line parameters

The LSRL estimates the regression-line parameters, meaning it provides numerical values that determine the fitted line from the sample data. These values control the line’s location and steepness.

In the equation $\hat{y} = a + bx$, the two parameters are:

$a$, the estimated y-intercept

$b$, the estimated slope

These are estimates, not fixed values known ahead of time. If the data change, the estimated line usually changes as well. The parameters are chosen together, not separately, because the line must satisfy the least-squares condition as a whole.

In practice, technology is usually used to compute the LSRL because the estimates come from formulas that combine all the data values systematically. On the AP Statistics exam, you are often expected to understand what the regression line represents and what makes it least-squares, even when a calculator or software provides the equation.
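For reference, the following is a sketch of what technology computes, assuming the standard closed-form formulas $b = \dfrac{\sum (x_i - \bar{x})(y_i - \bar{y})}{\sum (x_i - \bar{x})^2}$ and $a = \bar{y} - b\bar{x}$. The function name `fit_lsrl` and the data are made up for illustration; a calculator or statistics package would normally do this work.

```python
def fit_lsrl(xs, ys):
    """Return (a, b) for the least-squares line y-hat = a + b*x."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    # Sum of products of deviations, and sum of squared x deviations.
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    b = sxy / sxx            # fails if every x value is the same
    a = y_bar - b * x_bar    # intercept is set once the slope is known
    return a, b

# Made-up data, for illustration only.
xs = [1, 2, 3, 4, 5]
ys = [2, 4, 5, 4, 5]
a, b = fit_lsrl(xs, ys)
print(a, b)  # approximately 2.2 and 0.6
```

Every data value enters both sums, which is why changing even one observation usually changes both estimates.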

What to emphasize for AP Statistics

For this topic, focus on the defining properties of the LSRL rather than treating it as just a calculator output.

It is a linear model for two quantitative variables.

It is chosen by minimizing the sum of squared residuals.

The errors being minimized are vertical prediction errors in the response variable.

The line always passes through $(\bar{x}, \bar{y})$.

Its equation uses estimated parameters taken from the sample data.

Observed response values and predicted response values are usually different.

When reading regression output, think of the LSRL as the mathematically defined best line under one exact criterion. The line is special because its coefficients come from that minimizing rule.

FAQ

Why are residuals measured vertically instead of horizontally or perpendicular to the line?

In simple linear regression, the model is used to predict $y$ from $x$, so the error is measured in the response direction. A residual is therefore a vertical difference between an observed $y$ and a predicted $y$.

Using vertical distances also leads to formulas that are mathematically convenient and widely used in statistics software. If you reversed the roles of the variables, you would usually get a different least-squares line.
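A small Python sketch shows that swapping the roles of the variables gives a different line. The data are invented, and `fit_lsrl` is a hypothetical helper that applies the standard least-squares slope and intercept formulas.

```python
def fit_lsrl(xs, ys):
    """Return (intercept, slope) of the least-squares line predicting ys from xs."""
    x_bar = sum(xs) / len(xs)
    y_bar = sum(ys) / len(ys)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope

# Made-up data, for illustration only.
xs = [1, 2, 3, 4, 5]
ys = [2, 4, 5, 4, 5]

# Predict y from x: minimizes vertical (y-direction) errors.
a_yx, b_yx = fit_lsrl(xs, ys)

# Predict x from y: minimizes errors in the x direction instead.
a_xy, b_xy = fit_lsrl(ys, xs)

print(b_yx, b_xy)  # approximately 0.6 and 1.0
```

Here the second fit is $\hat{x} = -1 + 1 \cdot y$. Solved for $y$, that is $y = x + 1$, which has slope 1 rather than 0.6, so the two fits are not the same line drawn on the scatterplot.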

Is the least-squares regression line always unique?

There is a unique least-squares regression line as long as the explanatory-variable values are not all the same. The variation in $x$ allows the slope to be determined.

If every observation has the same $x$-value, there is no usable linear regression line of the form $\hat{y}=a+bx$ because the slope cannot be estimated from a set of identical explanatory values.

What happens to the LSRL if the units of a variable change?

Changing units changes the numerical values of the regression coefficients. For example, converting inches to feet or dollars to cents will rescale the slope and may also change the intercept.

However, the fitted relationship stays consistent if the conversion is done correctly. The model is still describing the same underlying pattern; only the numerical form of the equation changes to match the new units.
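To make the unit-change idea concrete, here is a sketch that fits the same made-up data twice, once with $x$ in inches and once in feet. The data and the helper `fit_lsrl` (standard least-squares formulas) are assumptions for illustration only.

```python
def fit_lsrl(xs, ys):
    """Return (intercept, slope) of the least-squares line predicting ys from xs."""
    x_bar = sum(xs) / len(xs)
    y_bar = sum(ys) / len(ys)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope

# Made-up data: explanatory variable recorded in inches.
xs_in = [12, 24, 36, 48, 60]
ys = [2, 4, 5, 4, 5]

# Same observations with the explanatory variable converted to feet.
xs_ft = [x / 12 for x in xs_in]

a_in, b_in = fit_lsrl(xs_in, ys)
a_ft, b_ft = fit_lsrl(xs_ft, ys)

# Predictions for the same observation agree in either unit system.
pred_in = a_in + b_in * 36   # 36 inches
pred_ft = a_ft + b_ft * 3    # 3 feet
```

In this example the slope per foot is 12 times the slope per inch, the intercept is unchanged because only $x$ was rescaled, and the prediction for the same observation is the same in both versions.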

Why do large residuals affect the line so much?

Squaring makes big deviations grow rapidly. A residual of $2$ contributes $4$ to the total, but a residual of $10$ contributes $100$.

Because of this, observations with large vertical misses can have a strong effect on which line minimizes the total squared error. Least squares does not treat all misses equally; it gives much more weight to the larger ones.
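The arithmetic can be checked in a few lines of Python. The comparison with absolute values is added here only to show the contrast; it is not part of the least-squares rule itself.

```python
residuals = [2, 10]

# Size of each miss without squaring.
absolute = [abs(r) for r in residuals]

# Contribution of each miss to the sum of squared residuals.
squared = [r ** 2 for r in residuals]

# The larger miss is 5 times as big, but counts 25 times as much.
abs_ratio = absolute[1] / absolute[0]
sq_ratio = squared[1] / squared[0]
```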

Why is least squares used so widely?

Least squares is popular because it gives a clear rule for choosing a line and produces formulas that can be computed efficiently. That makes it practical for calculators, software, and large data sets.

It is also the foundation for many later statistical methods. Once a line is fitted by least squares, other tools for studying regression are built around that same model.

Practice Questions

[2 marks] A student says, “The best regression line is the one for which the residuals add to 0.” Explain why this does not identify the least-squares regression line, and state the correct criterion for the least-squares regression line.

Explains that a residual sum of 0 does not uniquely determine the line, or that many lines can satisfy that condition. (1)

States that the least-squares regression line is the line that minimizes the sum of squared residuals. (1)
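A sketch supporting the first marking point: any line through the point of means has residuals that add to 0, so that condition alone cannot pick out one line. The data and the three trial slopes below are made up for illustration.

```python
# Made-up data, for illustration only.
xs = [1, 2, 3, 4, 5]
ys = [2, 4, 5, 4, 5]
x_bar = sum(xs) / len(xs)
y_bar = sum(ys) / len(ys)

results = {}
for b in (0.0, 0.6, 2.0):
    # Choose the intercept so the line passes through (x_bar, y_bar).
    a = y_bar - b * x_bar
    residuals = [y - (a + b * x) for x, y in zip(xs, ys)]
    # Store (sum of residuals, sum of squared residuals) for this slope.
    results[b] = (sum(residuals), sum(r ** 2 for r in residuals))

for b, (total, ssr) in results.items():
    print(b, round(total, 9), round(ssr, 9))
```

All three lines have a residual sum of 0 (up to rounding), but their sums of squared residuals differ, and only the slope 0.6 gives the smallest value for this data.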

[5 marks] For a data set of two quantitative variables, software reports the least-squares regression line as . The mean explanatory value is and the mean response value is .

(a) Verify that the point of means lies on the regression line.

(b) Explain what it means that this line is “least-squares.”

(c) Identify the regression-line parameters estimated by the model.

(a) Substitutes into the equation and gets . (1)

(a) Concludes that lies on the regression line. (1)

(b) States that among all possible lines, this one has the smallest sum of squared residuals. (1)

(b) Indicates that residuals are the differences between observed and predicted response values, or refers to vertical deviations from the line. (1)

(c) Identifies the parameters as the estimated y-intercept and the estimated slope . (1)